November 5, 2025

TOON vs JSON: Supercharge Your LLM Prompts & Cut Token Costs

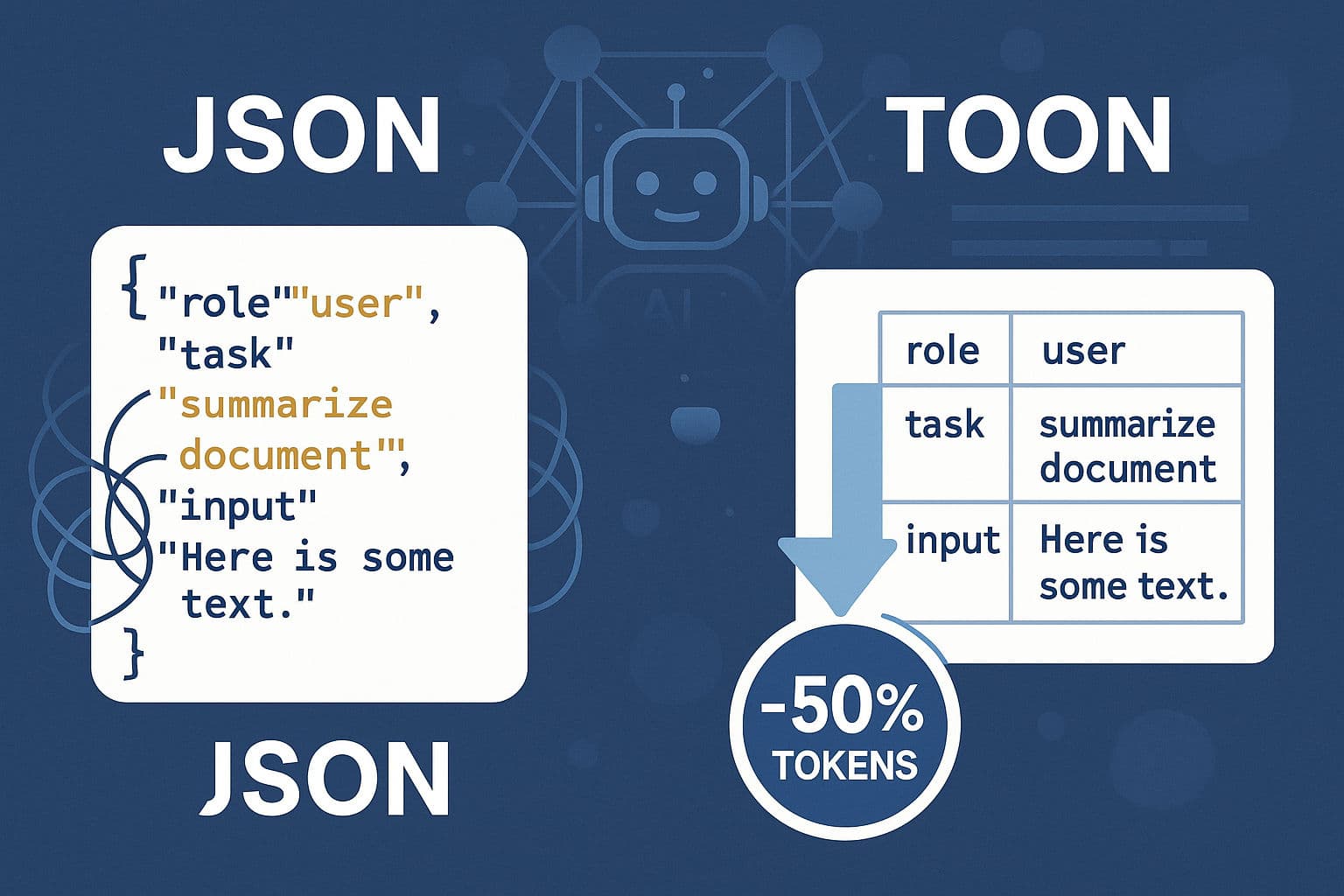


TL;DR

Token-Oriented Object Notation (TOON) is a compact, LLM-optimized alternative to JSON for serializing structured, mostly flat/tabular data. It removes repeated field names, quotes and redundant punctuation, and in real-world AI workflows this cuts token usage by roughly 30–60%. Fewer tokens mean lower API bills, larger usable context windows and, in some benchmarks, better retrieval accuracy for RAG and other context-injection tasks. Use TOON for uniform lists, search results, embedding batches and similar data. Fall back to JSON for deeply nested or highly variable structures.

Introduction

Large Language Models are metered by tokens, not bytes. That means the serialization format you feed into an LLM directly impacts cost, available context, and—sometimes—model accuracy. JSON is ubiquitous, readable, and interoperable, but it is not token-optimized: repeated keys, quoting, and punctuation add noise that consumes valuable token budget.

TOON (Token-Oriented Object Notation) is a compact serialization format designed to minimize token footprint while staying easy for both humans and LLMs to parse. It targets the most common LLM use cases: flat/tabular payloads used in RAG and retrieval, embedding batches, and structured prompt contexts.

Why Token Efficiency Matters

- Lower cost: Fewer tokens per API call directly reduces spend.

- More context: Fits more records into the same context window.

- Signal > noise: Compact formats reduce syntactic noise, which can improve LLM comprehension and retrieval fidelity.

- Scalability: Enables larger-scale RAG and context injection before you hit context-window limits.

When your app repeatedly serializes search results, user lists, or embedding tables into prompts, shaving off tokens compounds quickly.
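The back-of-the-envelope calculation below shows how a per-request saving grows with volume. Every figure in it is a hypothetical placeholder, not a measurement or any provider's published rate. That covers the tokens per request, the request volume, the price per million tokens and the 40% reduction. Substitute your own numbers.

```python
# All figures below are hypothetical placeholders for illustration only.
JSON_TOKENS_PER_CALL = 2_000      # prompt tokens spent on structured data, serialized as JSON
TOON_REDUCTION = 0.40             # assumed fraction of those tokens removed by switching to TOON
CALLS_PER_DAY = 50_000            # request volume
USD_PER_MILLION_TOKENS = 3.00     # placeholder input-token price


def daily_cost(tokens_per_call: float) -> float:
    """Daily spend on the structured-data portion of the prompt."""
    return tokens_per_call * CALLS_PER_DAY * USD_PER_MILLION_TOKENS / 1_000_000


json_cost = daily_cost(JSON_TOKENS_PER_CALL)
toon_cost = daily_cost(JSON_TOKENS_PER_CALL * (1 - TOON_REDUCTION))
yearly_savings = (json_cost - toon_cost) * 365

print(f"JSON: ${json_cost:,.2f}/day  TOON: ${toon_cost:,.2f}/day")
print(f"Savings: ${yearly_savings:,.0f}/year")
```

Cost scales linearly with tokens, so the percentage reduction carries straight through to spend at any volume.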

JSON vs TOON — Syntax and Token Comparison

JSON example (users list):

```json
{
  "users": [
    { "id": 1, "name": "Alice", "role": "admin" },
    { "id": 2, "name": "Bob", "role": "user" }
  ]
}
```

TOON equivalent:

```
users[2]{id,name,role}:
  1,Alice,admin
  2,Bob,user
```

Measured token usage (example):

- JSON: ~36 tokens

- TOON: ~19 tokens

Savings: ~47% on this sample. Exact counts depend on the tokenizer.

TOON states each field name once, in the header, instead of repeating it in every record. It also drops the quotes, braces and other punctuation that add little semantic value for LLMs when the structure is uniform and flat.
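The Python sketch below illustrates the mechanism. The `to_toon_table` helper is hypothetical and is not the `toon_format` library's API. It covers only the simplest case: a non-empty list of flat records that share the same fields, with values that need no quoting (no commas, quotes, colons or newlines). A conforming encoder also handles quoting, escaping, nesting and non-uniform arrays.

```python
from __future__ import annotations

from typing import Any


def _fmt(value: Any) -> str:
    """Render a primitive value the way this sketch writes it."""
    if value is None:
        return "null"
    if isinstance(value, bool):
        # str(True) would give "True"; TOON-style output uses lowercase literals
        return "true" if value else "false"
    return str(value)


def to_toon_table(key: str, rows: list[dict[str, Any]]) -> str:
    """Encode uniform flat records as a TOON-style tabular block (simplified sketch)."""
    if not rows:
        raise ValueError("this sketch needs at least one row")
    fields = list(rows[0])
    for row in rows:
        if list(row) != fields:
            raise ValueError("every row must have the same fields in the same order")
    # Header: array name, row count in [...], and the field names once in {...}
    header = f"{key}[{len(rows)}]{{{','.join(fields)}}}:"
    # One comma-separated line per record, indented two spaces
    body = ["  " + ",".join(_fmt(row[f]) for f in fields) for row in rows]
    return "\n".join([header, *body])


users = [
    {"id": 1, "name": "Alice", "role": "admin"},
    {"id": 2, "name": "Bob", "role": "user"},
]
print(to_toon_table("users", users))
```

The header carries the field names and row count once. Each additional record then costs only its values and separators.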

Real-World Benchmarks (Summary)

- Token reduction: 30–60% fewer tokens per context payload in production RAG and prompt-construction workloads.

- Context scale: Switching a search results payload from JSON (2,159 tokens) to TOON (762 tokens) enabled nearly 3× the amount of retrievable context in one model input.

- Retrieval accuracy (example RAG benchmark): 70.1% using TOON vs 65.4% using JSON in the same evaluation setup—less token noise can improve retrieval and downstream answer quality.

Note: Results will vary by data shape, prompt engineering, and model family. Benchmarks above reflect tabular/flat payloads where TOON applies.
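The percentages follow directly from the token counts quoted in this section. The quick check below uses only those figures. It shows that the 2,159-to-762 search payload is a reduction of about 65%, slightly above the 30–60% band. It also shows that the same token budget holds about 2.8 TOON payloads for every JSON payload.

```python
from __future__ import annotations


def compare(json_tokens: int, toon_tokens: int) -> tuple[float, float]:
    """Return (fraction of tokens saved, TOON payloads that fit in one JSON payload's budget)."""
    reduction = 1 - toon_tokens / json_tokens
    capacity = json_tokens / toon_tokens
    return reduction, capacity


for label, json_tokens, toon_tokens in [("users sample", 36, 19), ("search results", 2_159, 762)]:
    reduction, capacity = compare(json_tokens, toon_tokens)
    print(f"{label}: {reduction:.1%} fewer tokens, {capacity:.2f}x capacity")
```

Token reduction and context capacity are two views of the same ratio. A 50% reduction doubles capacity, and the ~65% reduction here yields the "nearly 3×" figure.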

Quickstart — Python

Install the reference package:

```shell
pip install toon_format
```

Encode / decode with the canonical API:

```python
from toon_format import encode, decode, estimate_savings

data = {
    "users": [
        {"id": 1, "name": "Alice", "role": "admin"},
        {"id": 2, "name": "Bob", "role": "user"},
    ]
}

# Encode to TOON
toon_str = encode(data)
print(toon_str)

# Decode back
python_obj = decode(toon_str)

# Estimate token savings
result = estimate_savings(data)
print(f"Saves {result['savings_percent']:.1f}% tokens")
```

CLI:

```shell
# Encode JSON file -> TOON
toon input.json -o output.toon

# Decode TOON -> JSON
toon output.toon -o out.json
```

When to Use TOON (Best Practices)

- Use TOON for:
  - Flat/tabular data (rows of uniform fields).
  - Search result payloads, short user lists, embedding batches, and product catalogs where fields are homogeneous.

- Fall back to JSON when:
  - Data contains deep nesting, variable record schemas, or optional fields that differ from record to record.
  - Human readability for non-technical stakeholders is required in storage or logs.

- Integration tip: Convert to TOON immediately before making the LLM call. Internally, keep JSON/native structures for app logic and persist canonical JSON for debugging and auditing.

- Parsing & validation: Keep a small schema/field-order descriptor alongside TOON payloads so decoders can recover types and optional fields deterministically.
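Below is a small decoder sketch that puts the parsing-and-validation advice into practice. It handles a single tabular block like the users example. The `parse_toon_table` function and its `types` descriptor are hypothetical illustrations, not part of `toon_format`. The sketch assumes unquoted values without embedded commas and uses the header's declared row count as a cheap integrity check. It converts types through the side-car descriptor rather than reproducing the format's own quoting, escaping and primitive-parsing rules, which a spec-compliant decoder must follow.

```python
from __future__ import annotations

import re
from typing import Any, Callable

# Header shape assumed by this sketch: name[count]{field1,field2,...}:
HEADER_RE = re.compile(r"^(?P<key>\w+)\[(?P<count>\d+)\]\{(?P<fields>[^}]*)\}:$")


def parse_toon_table(
    text: str, types: dict[str, Callable[[str], Any]]
) -> dict[str, list[dict[str, Any]]]:
    """Decode one simplified TOON-style tabular block, converting fields via `types`."""
    lines = text.strip("\n").splitlines()
    match = HEADER_RE.match(lines[0].strip())
    if match is None:
        raise ValueError(f"not a tabular header: {lines[0]!r}")
    fields = match["fields"].split(",")
    declared = int(match["count"])
    rows = [line.strip() for line in lines[1:] if line.strip()]
    # The [count] in the header lets the decoder detect truncated or padded payloads.
    if len(rows) != declared:
        raise ValueError(f"header declares {declared} rows, found {len(rows)}")
    records = []
    for row in rows:
        values = row.split(",")
        if len(values) != len(fields):
            raise ValueError(f"expected {len(fields)} values, got {len(values)}: {row!r}")
        # Fields missing from the descriptor are kept as strings.
        records.append({f: types.get(f, str)(v) for f, v in zip(fields, values)})
    return {match["key"]: records}


toon_text = "users[2]{id,name,role}:\n  1,Alice,admin\n  2,Bob,user"
schema = {"id": int, "name": str, "role": str}  # hypothetical side-car descriptor
print(parse_toon_table(toon_text, schema))
```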

Pitfalls & Limitations

- Schema rigidity: TOON assumes a consistent per-row schema. Highly variable records lose TOON's efficiency gains.

- Complex nested data: Hierarchies and trees are better represented in JSON/YAML.

- Human readability tradeoff: TOON is compact but less immediately readable for ad-hoc inspection—maintain dev tooling (CLI, viewers) to bridge the gap.
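You can check mechanically, before serialization, whether a payload avoids these pitfalls. The heuristic below is a hypothetical sketch, not a rule from the TOON specification. It treats a list as tabular when every item is a dict with the same set of keys and only primitive values, and it routes everything else to JSON.

```python
from __future__ import annotations

from typing import Any

PRIMITIVES = (str, int, float, bool, type(None))


def is_tabular(records: list[Any]) -> bool:
    """True when records are dicts sharing one key set whose values are all primitives."""
    if not records or not all(isinstance(r, dict) for r in records):
        return False
    keys = set(records[0])
    return all(
        set(r) == keys and all(isinstance(v, PRIMITIVES) for v in r.values())
        for r in records
    )


def choose_format(records: list[Any]) -> str:
    """Pick the serialization to use right before the LLM call."""
    return "toon" if is_tabular(records) else "json"


products = [{"sku": "A1", "price": 9.5}, {"sku": "B2", "price": 12.0}]
events = [{"id": 1, "tags": ["x", "y"]}, {"id": 2}]  # nested value and a missing key
print(choose_format(products))  # toon
print(choose_format(events))    # json
```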

Conclusion

TOON is a pragmatic, low-friction way to reduce token usage where it matters most: flat, repeatable payloads fed into LLM prompts. If you operate RAG pipelines, batch-embed workflows, or otherwise include structured tabular data in prompts, TOON offers a straightforward lever to reduce cost and increase context capacity—often with secondary gains in model performance.

Try it on a representative dataset, measure token savings and retrieval metrics, and adopt TOON selectively where the format matches your data shape.