April 5, 2026

Netflix Uses Cassandra. Instagram Uses PostgreSQL. Twitter Uses Redis. They're All Right.

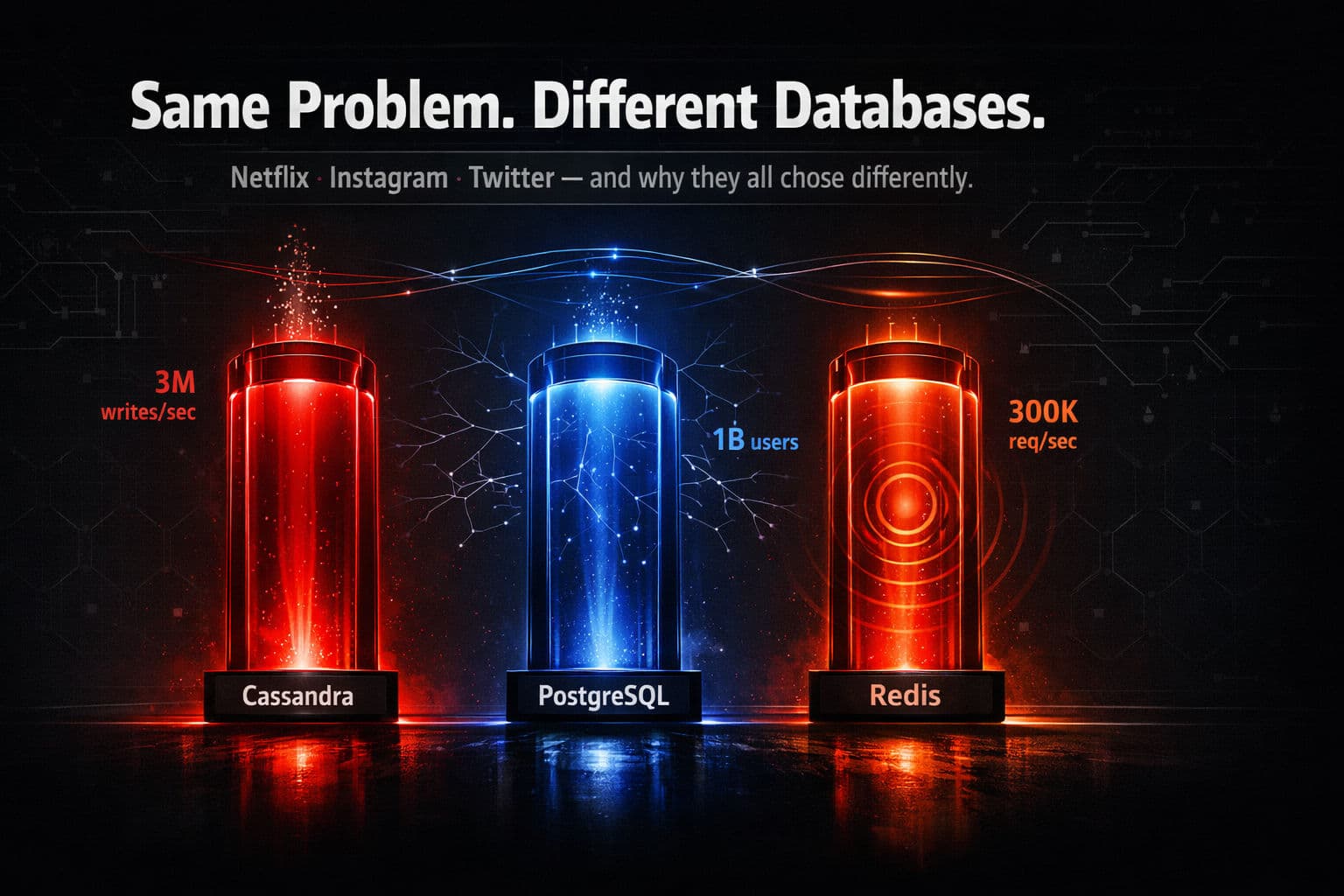

Netflix writes 3M records/sec. Instagram serves a billion users. Twitter handles 300K timeline reads/sec. Each picked a radically different database — not because one is better, but because their access patterns are fundamentally different. This post reverse-engineers all three architectures and gives you a mental framework for making these decisions yourself.

TL;DR

- Netflix → Cassandra: 3M+ writes/sec, horizontal write scale, partition-key-only access, aggressive denormalization by design.

- Instagram → PostgreSQL: Read-heavy, relational queries, PgBouncer + replicas + partitioning push it to billion-user scale.

- Twitter → Redis: Pre-built timelines served from RAM in microseconds; Redis is the cache, not the source of truth.

- The right database isn't the fastest or most scalable in the abstract — it's the one that matches your specific access pattern.

- Most systems don't need Cassandra or Redis. A well-indexed PostgreSQL handles more load than 95% of applications will ever see.

Why this matters

Pick the wrong database early and you don't just get bad performance — you get a rewrite. The schema assumptions, query patterns, and operational model you bake in on day one are extremely expensive to undo at scale.

The mistake most engineers make is choosing a database based on brand recognition. "Netflix uses Cassandra, so we should too." That reasoning is exactly backwards. Netflix uses Cassandra because their access pattern demanded it. Yours probably doesn't.

Here's how to think about it properly — through the lens of three companies that got it right for completely different reasons.

Netflix — Cassandra for write throughput

The problem: Every user interaction on Netflix generates data. What you watched, paused, searched, hovered over, what device you're on. Across 260 million subscribers, many watching simultaneously, that's 3+ million writes per second.

Why Cassandra: At its core, Cassandra behaves like a distributed hashmap. When you write data, it hashes your partition key and routes the write to the replica nodes that own that key. Each replica appends the write to a commit log and updates an in-memory structure called a memtable. No cross-node locking. No read-before-write. No query planner overhead. Each write is essentially an append operation.

That's why it handles millions of writes per second. The architecture is designed to absorb write volume, not to answer arbitrary questions about it.
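To make that write path concrete, here is a minimal toy sketch in Python. It is not Cassandra's implementation: the `ToyRing` class, the node names, the MD5-based token, and the one-token-per-node layout are all assumptions chosen for illustration (real clusters use a different partitioner and many virtual nodes per machine). What it does show is the shape of the work: hash the key, find the owners, append.

```python
import hashlib
from bisect import bisect_right
from collections import defaultdict


class ToyRing:
    """Toy hash ring: a key is stored on the next N nodes clockwise from its token."""

    def __init__(self, nodes, replication_factor=2):
        self.replication_factor = replication_factor
        # One token per node, derived from the node name (an illustrative simplification).
        self.ring = sorted((self._token(name), name) for name in nodes)
        self.tokens = [token for token, _ in self.ring]
        # Per-node append-only commit log and in-memory memtable.
        self.commit_logs = defaultdict(list)
        self.memtables = defaultdict(lambda: defaultdict(list))

    @staticmethod
    def _token(value):
        # Hypothetical token function: first 8 bytes of an MD5 digest.
        digest = hashlib.md5(value.encode("utf-8")).digest()
        return int.from_bytes(digest[:8], "big")

    def replicas_for(self, partition_key):
        """Return the nodes responsible for this partition key."""
        start = bisect_right(self.tokens, self._token(partition_key)) % len(self.ring)
        count = min(self.replication_factor, len(self.ring))
        return [self.ring[(start + i) % len(self.ring)][1] for i in range(count)]

    def write(self, partition_key, row):
        """Route the write by hash, then append; nothing is read or planned first."""
        replicas = self.replicas_for(partition_key)
        for node in replicas:
            self.commit_logs[node].append((partition_key, row))  # sequential append
            self.memtables[node][partition_key].append(row)      # in-memory update
        return replicas
```

Calling `ToyRing(["node-a", "node-b", "node-c"]).write("user:42", {"event": "pause"})` touches only the two nodes that own `user:42`. Adding nodes spreads keys across more owners, which is the intuition behind horizontal write scale.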

What Netflix gave up: Query flexibility. There are no joins in Cassandra. No ad hoc queries. No writing a quick SQL query to answer a business question.

Netflix's solution is data modeling around queries, not entities. Need viewing history by user? That's one table with user_id as the partition key. Need trending content by region? That's a separate table with the same data, organized differently.

⚠️ Warning: Engineers who use Cassandra like a relational database — running multi-partition queries and secondary index lookups — routinely hit 200ms read latencies and wonder what went wrong. They're fighting the database's design, not working with it.

Yes, Netflix stores the same data multiple times. In a relational database that's a sin. In Cassandra, it's a strategy.
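Here is a small sketch of what "one table per query" looks like, using plain Python dictionaries as stand-ins for Cassandra tables. The table names, field names, and helper functions are hypothetical and not Netflix's schema. The point is only that a single event is written twice, once into each query-shaped structure, so that every read touches exactly one partition.

```python
from collections import Counter, defaultdict

# Two "tables", each keyed by the partition key of the query it serves.
viewing_history_by_user = defaultdict(list)  # partition key: user_id
views_by_region = defaultdict(list)          # partition key: region


def record_view(user_id, region, title, watched_at):
    """Write the same event twice, once per query-shaped table."""
    event = {"user_id": user_id, "region": region,
             "title": title, "watched_at": watched_at}
    viewing_history_by_user[user_id].append(event)
    views_by_region[region].append(event)


def history_for_user(user_id):
    """Single-partition read: one user's events, newest first."""
    events = viewing_history_by_user.get(user_id, [])
    return sorted(events, key=lambda e: e["watched_at"], reverse=True)


def top_titles_in_region(region, limit=3):
    """Single-partition read: most-watched titles in one region."""
    counts = Counter(e["title"] for e in views_by_region.get(region, []))
    return [title for title, _ in counts.most_common(limit)]
```

Neither read function ever needs the other table, and neither needs a join. The cost is on the write side: every new query pattern means another structure that `record_view` has to keep up to date.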

Could they have used PostgreSQL? For reads, absolutely — Postgres is excellent. But at 3M writes/sec, a single Postgres instance falls over. Even with connection pooling, read replicas, and a sharding layer, you're building significant complexity to replicate what Cassandra gives you natively.

Instagram — PostgreSQL for relational complexity

The problem: Instagram needs to show you a feed of posts from people you follow. Load profile pages with post and follower counts. Sort comments by time or popularity. Handle likes, follows, and DMs.

These are relational queries. A feed requires joining posts with follow relationships. Profile pages need aggregated counts across record types. Comments need sorting and pagination.

Instagram ran its core backend on PostgreSQL for years, with over a billion users. This surprises most engineers who assume you need distributed NoSQL at that scale.

Why PostgreSQL: Instagram's workload is read-heavy. People scroll feeds far more often than they post. The queries they need — joins, aggregations, complex filtering, sorted pagination — are exactly what PostgreSQL is built for.

If they'd chosen Cassandra, every new query pattern would require a new denormalized table. A new product feature that reads data differently means a new data pipeline. With PostgreSQL, you write a new query. Done.
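The "write a new query" point is easy to see in a few lines. The sketch below uses Python's built-in `sqlite3` module as a stand-in for PostgreSQL so it runs anywhere; the tables, columns, and sample rows are hypothetical and not Instagram's schema. The SQL itself is ordinary and would look much the same on Postgres.

```python
import sqlite3


def build_demo_db():
    """Create a tiny in-memory database with hypothetical follows and posts tables."""
    conn = sqlite3.connect(":memory:")
    conn.executescript("""
        CREATE TABLE follows (follower_id INTEGER, followee_id INTEGER,
                              PRIMARY KEY (follower_id, followee_id));
        CREATE TABLE posts (id INTEGER PRIMARY KEY, author_id INTEGER,
                            caption TEXT, created_at INTEGER);
        CREATE INDEX posts_by_author_time ON posts (author_id, created_at DESC);
    """)
    conn.executemany("INSERT INTO follows VALUES (?, ?)", [(1, 2), (1, 3)])
    conn.executemany("INSERT INTO posts VALUES (?, ?, ?, ?)", [
        (10, 2, "sunset", 100),
        (11, 3, "coffee", 200),
        (12, 4, "not followed", 300),
    ])
    return conn


def feed(conn, user_id, limit=20):
    """Newest posts from accounts the user follows: one join, sorted and limited."""
    rows = conn.execute(
        """
        SELECT p.id, p.caption
        FROM posts AS p
        JOIN follows AS f ON f.followee_id = p.author_id
        WHERE f.follower_id = ?
        ORDER BY p.created_at DESC
        LIMIT ?
        """,
        (user_id, limit),
    )
    return rows.fetchall()


def post_count(conn, author_id):
    """A new product question needs only a new query, not a new table."""
    row = conn.execute(
        "SELECT COUNT(*) FROM posts WHERE author_id = ?", (author_id,)
    ).fetchone()
    return row[0]
```

`feed` and `post_count` read the same two tables in different ways. In the Cassandra model, each would typically get its own denormalized table and its own write path.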

How they scaled it:

| Technique | Purpose |
|---|---|
| PgBouncer | Connection pooling — handles thousands of concurrent connections without overwhelming Postgres |
| Read replicas | Distributes read traffic across multiple instances |
| Table partitioning | Splits massive tables into manageable chunks |
| Targeted indexing | Ensures common queries hit indexes, never full table scans |
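Of these techniques, read replicas are the simplest to picture in code. Below is a deliberately naive routing sketch: the `ReadWriteRouter` class and the connection names are hypothetical, and the "is this a read?" check is a plain prefix test that a real router would need to harden (for example, `SELECT ... FOR UPDATE` belongs on the primary). Replication lag is not modeled at all.

```python
from itertools import cycle


class ReadWriteRouter:
    """Send writes to the primary and spread reads across replicas, round-robin."""

    def __init__(self, primary, replicas):
        self.primary = primary
        # With no replicas configured, reads fall back to the primary.
        self._read_targets = cycle(replicas or [primary])

    def target_for(self, sql):
        """Pick a connection name for a statement based on a naive prefix check."""
        is_read = sql.lstrip().upper().startswith("SELECT")
        return next(self._read_targets) if is_read else self.primary
```

With `ReadWriteRouter("primary", ["replica-1", "replica-2"])`, every `INSERT` or `UPDATE` lands on the primary while consecutive `SELECT` statements alternate between the two replicas. For a read-heavy workload, that moves most of the traffic off the machine that handles writes.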

💡 Tip: Instagram doesn't use PostgreSQL for everything. Messaging, real-time notifications, and analytics pipelines each use different storage systems. The key is they didn't abandon Postgres for their core use case just because someone told them SQL doesn't scale.

The lesson is powerful: with good engineering, you can stretch a database that fits your access pattern far beyond its apparent limits. Don't reach for distributed NoSQL prematurely just because your user count is high. If your access patterns are relational, use a relational database and invest in making it scale.

Twitter — Redis for sub-millisecond timeline reads

The problem: When you open Twitter, you need to see the most recent tweets from everyone you follow. Instantly. Not 200ms. Not 100ms. Instantly.

A user might follow 2,000 accounts. Each of those accounts might tweet several times a day. Twitter needs to merge, rank, and serve all of that in single-digit milliseconds.

Why Redis: Redis operates entirely in memory. No disk I/O, no query parsing, no index lookups. Your timeline is a pre-built list sitting in RAM, and reading it takes microseconds.

Twitter serves timelines at 300,000 requests per second using a pattern called fan-out on write.

When a user tweets, Twitter doesn't wait for followers to request it. The tweet is immediately pushed into the Redis timeline cache of every follower. When you open the app, your timeline is already assembled and waiting.
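A minimal in-process sketch of fan-out on write follows. Python deques stand in for Redis lists, and the `FanOutOnWrite` class, its method names, and the default cap of 800 entries per timeline are assumptions for illustration, not Twitter's code. The `appendleft` on a bounded deque plays the role of pushing to the head of a list and trimming the tail.

```python
from collections import defaultdict, deque
from itertools import islice


class FanOutOnWrite:
    """Push each new tweet id into every follower's prebuilt timeline."""

    def __init__(self, timeline_limit=800):
        self.followers = defaultdict(set)  # author_id -> follower ids
        # user_id -> newest-first tweet ids, capped at timeline_limit entries
        self.timelines = defaultdict(lambda: deque(maxlen=timeline_limit))

    def follow(self, follower_id, author_id):
        self.followers[author_id].add(follower_id)

    def post_tweet(self, author_id, tweet_id):
        """Do the expensive work at write time: one push per follower."""
        targets = self.followers[author_id]
        for follower_id in targets:
            # Newest entry goes to the front; the oldest falls off when full.
            self.timelines[follower_id].appendleft(tweet_id)
        return len(targets)

    def read_timeline(self, user_id, count=20):
        """Reading is a slice of a prebuilt list; nothing is merged at request time."""
        return list(islice(self.timelines[user_id], count))
```

Notice where the cost sits. `post_tweet` does work proportional to the author's follower count, while `read_timeline` does the same small amount of work no matter how many accounts the reader follows. That trade only pays off because timelines are read far more often than tweets are written.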

⚠️ Warning: Twitter never treats Redis as the source of truth. Write to the durable store first, then replicate to Redis. Read from Redis, fall back to the durable store if Redis fails. Companies that skip this step have written postmortems titled "How we lost two days of user data". Redis by default can lose data on restart — it's designed for speed, not durability.
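The ordering in that warning can be sketched as follows. Everything here is a stand-in: a plain dict plays the durable store, `FlakyCache` is a hypothetical in-memory object that can be switched off to simulate a Redis outage, and `TimelineService` is an illustrative name. It is a sketch of the write-then-cache, read-with-fallback pattern under those assumptions, not a production client.

```python
class FlakyCache:
    """In-memory stand-in for a cache server that can be made unavailable."""

    def __init__(self):
        self.data = {}
        self.available = True

    def get(self, key):
        if not self.available:
            raise ConnectionError("cache unavailable")
        return self.data.get(key)

    def set(self, key, value):
        if not self.available:
            raise ConnectionError("cache unavailable")
        self.data[key] = value


class TimelineService:
    def __init__(self, durable_store, cache):
        self.durable_store = durable_store  # dict: user_id -> tweet ids, newest first
        self.cache = cache

    def append(self, user_id, tweet_id):
        # 1. Durable write first. If this raised, the cache would never be touched.
        self.durable_store.setdefault(user_id, []).insert(0, tweet_id)
        # 2. Best-effort cache update. A cache failure must not lose the write.
        try:
            self.cache.set(user_id, list(self.durable_store[user_id]))
        except ConnectionError:
            pass

    def read(self, user_id):
        try:
            cached = self.cache.get(user_id)
            if cached is not None:
                return cached
        except ConnectionError:
            pass  # cache is down: fall through to the durable store
        timeline = list(self.durable_store.get(user_id, []))
        try:
            self.cache.set(user_id, timeline)  # repopulate for the next reader
        except ConnectionError:
            pass
        return timeline
```

If the cache goes down or restarts empty, reads get slower but no data is lost, because the durable store saw every write first. One thing this sketch does not handle is a cache that comes back holding stale entries; a real system would expire or invalidate them.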

The celebrity edge case: What happens when an account with 80 million followers tweets? Fan-out on write means 80 million Redis list updates for a single tweet. That's prohibitively expensive.

Twitter's solution is a hybrid approach:

| Account type | Strategy |
|---|---|
| Regular users | Fan-out on write — pre-pushed to follower timelines |
| Celebrity accounts (millions of followers) | Fan-out on read — fetched in real-time and merged at request time |

There's no one-size-fits-all solution, even within a single feature.
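The hybrid can be sketched in a few dozen lines. The `HybridTimelines` class, the follower-count threshold, and the `(timestamp, tweet_id)` tuples are all assumptions made for illustration; how Twitter actually classifies accounts and ranks results is not covered by this post.

```python
import heapq
from collections import defaultdict


class HybridTimelines:
    """Fan-out on write for regular accounts, fan-out on read for celebrities."""

    def __init__(self, celebrity_threshold):
        self.celebrity_threshold = celebrity_threshold  # assumed follower cutoff
        self.followers = defaultdict(set)   # author -> followers
        self.following = defaultdict(set)   # user -> authors they follow
        self.authored = defaultdict(list)   # author -> [(timestamp, tweet_id)]
        self.pushed = defaultdict(list)     # user -> entries pushed at write time

    def follow(self, follower, author):
        self.followers[author].add(follower)
        self.following[follower].add(author)

    def is_celebrity(self, author):
        return len(self.followers[author]) >= self.celebrity_threshold

    def post(self, author, timestamp, tweet_id):
        """Store the tweet; push it to followers only for regular accounts."""
        self.authored[author].append((timestamp, tweet_id))
        if self.is_celebrity(author):
            return 0  # skip the fan-out; readers will pull this tweet instead
        for follower in self.followers[author]:
            self.pushed[follower].append((timestamp, tweet_id))
        return len(self.followers[author])

    def timeline(self, user, count=20):
        """Merge the prebuilt entries with celebrity tweets fetched at read time."""
        entries = set(self.pushed[user])  # a set drops tweets seen via both paths
        for author in self.following[user]:
            if self.is_celebrity(author):
                entries.update(self.authored[author])
        newest = heapq.nlargest(count, entries)  # sort by timestamp, newest first
        return [tweet_id for _, tweet_id in newest]
```

A celebrity post returns `0` pushes no matter how many followers the account has, and the cost moves to `timeline`, which merges in a handful of celebrity authors per reader. The set also covers the awkward case of an account that crosses the threshold after some of its tweets were already pushed.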

The decision framework

Three questions determine which database to reach for:

1. What's your primary access pattern?

| Pattern | Right tool |
|---|---|
| Flexible queries, joins, aggregations | PostgreSQL |
| Massive write throughput, key-based reads | Cassandra / DynamoDB |
| Ultra-low-latency reads of precomputed data | Redis (as a cache layer) |

2. What are you willing to sacrifice?

| Database | Gives you | Costs you |
|---|---|---|
| PostgreSQL | Query flexibility, ACID guarantees | Struggles with write volume at extreme scale |
| Cassandra | Horizontal write scale | Query flexibility, ad hoc analysis |
| Redis | Microsecond read latency | Durability, storage capacity |

3. Do you actually need it?

This is the most important question of all.

A well-indexed PostgreSQL handles more load than 95% of applications will ever see. Don't choose Cassandra because Netflix uses it when your app has 10,000 users. Choose it when you have the access pattern and scale that actually demand it.
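If it helps to see the three questions as rules, here is one way to encode them. Treat it as a conversation starter rather than a calculator: the `Workload` fields, the `recommend` function, and especially the two numeric thresholds are assumptions invented for this sketch. Your own ceiling depends on hardware, schema, and row size, so measure before you trust any number here.

```python
from dataclasses import dataclass
from typing import Optional, Tuple

# Both thresholds are placeholders for illustration; benchmark your own system.
ASSUMED_SINGLE_PRIMARY_WRITE_CEILING = 50_000  # writes/sec
ASSUMED_CACHE_LATENCY_BUDGET_MS = 5.0


@dataclass
class Workload:
    needs_joins_or_adhoc_queries: bool
    peak_writes_per_sec: int
    reads_are_precomputed: bool
    read_latency_budget_ms: float


def recommend(w: Workload) -> Tuple[str, Optional[str]]:
    """Return (primary store, optional cache layer) for a workload."""
    extreme_writes = w.peak_writes_per_sec > ASSUMED_SINGLE_PRIMARY_WRITE_CEILING
    # Question 3 first: default to PostgreSQL unless the workload rules it out.
    if extreme_writes and not w.needs_joins_or_adhoc_queries:
        primary = "Cassandra / DynamoDB"
    else:
        # Extreme writes plus relational queries stay here too; that is the
        # sharded-Postgres route, with the complexity that comes with it.
        primary = "PostgreSQL"
    # Redis only ever appears as a cache in front of a durable primary.
    wants_cache = (w.reads_are_precomputed
                   and w.read_latency_budget_ms < ASSUMED_CACHE_LATENCY_BUDGET_MS)
    return primary, ("Redis" if wants_cache else None)
```

A 10,000-user app with relational queries and a few hundred writes per second comes out as `("PostgreSQL", None)`, which is the point of the question above.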

Conclusion

Netflix, Instagram, and Twitter made completely different database choices — and every one of them was correct. Not because one database beats the others, but because each team understood their access patterns, accepted the right trade-offs, and matched the tool to the problem.

That's the actual skill in system design. Not memorizing which database is fastest or most scalable in the abstract. Understanding your data, your queries, your trade-offs — and then making the match.

Start with PostgreSQL. Master it deeply. Know when you've genuinely hit its limits. And only then reach for something more complex.

FAQ

Why does Netflix use Cassandra instead of PostgreSQL?

Netflix writes 3+ million records per second. A single PostgreSQL instance cannot absorb that write throughput, and reaching it with sharding and read replicas means building significant complexity yourself. Cassandra's distributed architecture routes each write to the responsible nodes by hashing the partition key, handles it as an append operation with minimal overhead, and scales horizontally, all without the coordination cost of a relational system.

How does Instagram run on PostgreSQL with a billion users?

Instagram's workload is read-heavy and highly relational — feeds, follower counts, comments, sorted timelines. PostgreSQL excels at exactly these query patterns. They scaled it using PgBouncer for connection pooling, read replicas, table partitioning, and smart indexing. The lesson: NoSQL isn't required just because your user count is high.

Is Redis a database or a cache?

Redis is an in-memory data store most commonly used as a cache — not a source of truth. Twitter uses Redis to serve pre-built timelines at sub-millisecond latency, but all tweets are durably written to a separate database first. Using Redis as a primary database risks losing data on restart.

What is fan-out on write vs fan-out on read?

Fan-out on write pushes new data to every follower's cache the moment it's created. Fan-out on read assembles a timeline dynamically at request time. Twitter uses fan-out on write for regular users but switches to fan-out on read for celebrity accounts with millions of followers, because pushing one tweet to 80M Redis lists is prohibitively expensive.

How do I choose between Cassandra, PostgreSQL, and Redis?

Ask three questions: (1) What's your primary access pattern — relational joins, massive write throughput, or ultra-low-latency reads? (2) What are you willing to sacrifice — query flexibility, write scale, or durability? (3) Do you actually need a distributed system, or would a well-indexed PostgreSQL handle your real load? Most apps never outgrow a single well-tuned Postgres instance.

What does data denormalization mean in Cassandra?

In relational databases, you store each piece of data once and join tables to answer queries. In Cassandra, joins don't exist — so you store the same data multiple times, in separate tables each modeled for a specific query. This is intentional and called denormalization. It trades storage for read performance.

Can PostgreSQL scale to millions of users?

Yes. Instagram ran its core backend on PostgreSQL for years with over a billion users. With connection pooling (PgBouncer), read replicas, partitioning, and proper indexing, PostgreSQL can handle far more load than most engineers assume. The mistake is reaching for distributed NoSQL before you've actually exhausted what a well-engineered relational system can do.